San Antonio Review is a small publisher that works primarily on digital channels and occasionally in print. We talk about our work as an experiment in prefigurative politics: the practice of building institutions today that embody the values of the world we hope to see tomorrow.

What does this mean in practice?

We rely on a very lean staff and a team of volunteers who work collaboratively and transparently, modeling the spirit of shared responsibility and mutual care. Funding comes not from advertisers or large institutions, but from grants, donations, submission fees and the sale of issues, books, and merchandise. This independence safeguards our editorial freedom, allowing us to prioritize integrity, experimentation and inclusivity over profit or prestige.

As a result, we handle a high volume of work relative to the size of our team and face intense publication pressure under tight deadlines. We need tools that give contributors insight into the editorial process, remove friction from publishing, and ensure we aren’t undermining what makes San Antonio Review worth reading.

The central tension we face is how to publish faster without compromising our integrity as a publication.

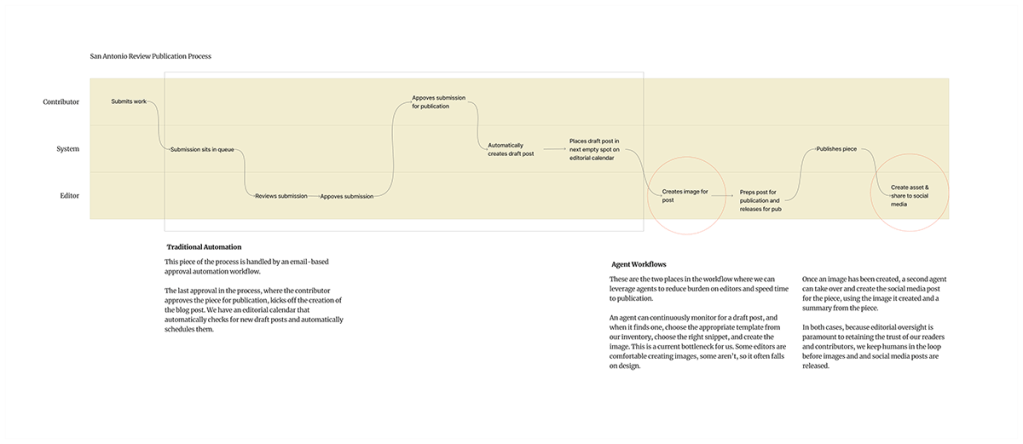

SAN ANTONIO REVIEW EDITORIAL PROCESS

Our submission and approval process is partially automated, but production work — creating images and publishing to social media — still falls to editors and creates the real bottleneck.

The question is not what AI can do or how much of the process I can automate, but whose trust would be affected, and at what cost. Editorial judgment is foundational to our work at San Antonio Review. It is the operational bedrock of publishing. We have two trust relationships to consider: one with contributors, whose work must be reviewed and evaluated by humans, and one with readers, who expect a viewpoint and experience that can only be provided by us, the humans who publish the journal.

With these considerations in mind, I discarded some AI-enabled process enhancement ideas precisely because they could have violated that trust. Editors don’t review submissions on a set schedule, so a substantial backlog can sometimes build up. I considered creating an agent that would read incoming submissions and rate them against a set of editorial standards and criteria pulled from previously published works: in effect, a model of a “good piece” against which the agent could evaluate a new submission and estimate where an editor might rank it. This might make it easier for editors to triage what to read first when facing a large backlog; however, it would also violate contributors’ trust in us. Every submitter expects a fair shake from an editor. Anything less violates the implied contract we have with them.